Deep Learning Explained

What Deep Learning Means for Modern Technology

Deep Learning is a branch of machine learning that uses stacked layers of learned transformations to model complex patterns in data. These layered models are called deep neural networks, and they have transformed fields from image recognition to natural language processing. The impact of Deep Learning is visible in consumer products and enterprise systems alike. As organizations seek intelligent automation and better decision-making, understanding Deep Learning is becoming a core requirement for engineers and business leaders.

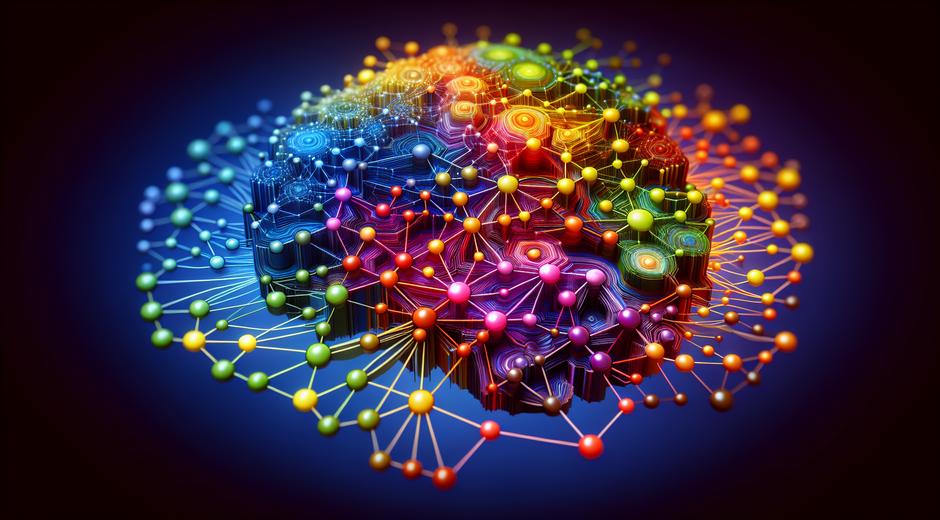

How Deep Learning Works

At its core, Deep Learning trains neural networks on large collections of labeled or unlabeled data. Each layer in a neural network learns to extract features of increasing complexity. Early layers capture simple patterns such as edges in images or phonemes in audio. Later layers combine those signals into higher-level concepts such as faces or phrases. Training is an optimization problem: backpropagation computes the gradient of a loss function with respect to every network parameter, and an optimizer such as stochastic gradient descent uses those gradients to update the parameters. The scale of available data and compute resources largely determines the size and depth of models that can be trained effectively.
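The training loop described above can be sketched in a few dozen lines of plain Python. This is only an illustration under stated assumptions: the network (one hidden tanh layer, one linear output), the toy data (samples of sin(x)), and names such as `backprop`, `sgd_step`, and `train` are all hypothetical choices made for this example, not part of any library.

```python
import math
import random


def forward(params, x):
    """One hidden tanh layer followed by a single linear output unit."""
    w1, b1, w2, b2 = params
    h = [math.tanh(w * x + b) for w, b in zip(w1, b1)]
    y_hat = sum(v * hi for v, hi in zip(w2, h)) + b2
    return h, y_hat


def loss(params, x, y):
    """Squared error for one example."""
    _, y_hat = forward(params, x)
    return 0.5 * (y_hat - y) ** 2


def backprop(params, x, y):
    """Gradient of the loss for one example, computed output layer first."""
    w1, b1, w2, b2 = params
    h, y_hat = forward(params, x)
    d_out = y_hat - y                       # dL/dy_hat
    g_w2 = [d_out * hi for hi in h]         # gradient for output weights
    g_b2 = d_out
    # Chain rule through tanh: d tanh(z)/dz = 1 - tanh(z)^2
    d_pre = [d_out * v * (1.0 - hi * hi) for v, hi in zip(w2, h)]
    g_w1 = [d * x for d in d_pre]
    g_b1 = list(d_pre)
    return g_w1, g_b1, g_w2, g_b2


def sgd_step(params, grads, lr):
    """Move every parameter a small step against its gradient."""
    w1, b1, w2, b2 = params
    g_w1, g_b1, g_w2, g_b2 = grads
    return (
        [w - lr * g for w, g in zip(w1, g_w1)],
        [b - lr * g for b, g in zip(b1, g_b1)],
        [w - lr * g for w, g in zip(w2, g_w2)],
        b2 - lr * g_b2,
    )


def mean_loss(params, data):
    return sum(loss(params, x, y) for x, y in data) / len(data)


def train(data, hidden=4, lr=0.05, epochs=200, seed=0):
    """Plain SGD: one example per update, visited in random order."""
    rng = random.Random(seed)
    params = (
        [rng.uniform(-1.0, 1.0) for _ in range(hidden)],
        [0.0] * hidden,
        [rng.uniform(-1.0, 1.0) for _ in range(hidden)],
        0.0,
    )
    history = [mean_loss(params, data)]
    examples = list(data)
    for _ in range(epochs):
        rng.shuffle(examples)
        for x, y in examples:
            params = sgd_step(params, backprop(params, x, y), lr)
        history.append(mean_loss(params, data))
    return params, history


# Toy data (an assumption for illustration): samples of sin(x) on [-2, 2].
data = [(i / 5.0, math.sin(i / 5.0)) for i in range(-10, 11)]
params, history = train(data)
print(f"mean loss before: {history[0]:.4f}, after: {history[-1]:.4f}")
```

Real frameworks compute these gradients automatically and process examples in mini-batches, but the division of labor is the same: backpropagation supplies gradients, and the optimizer decides how to apply them.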

Key Architectures in Deep Learning

Several major classes of neural network architectures have proven especially effective in practice. Convolutional neural networks excel at tasks involving visual information. They use convolution operations to detect local patterns wherever they appear in the input; the resulting feature maps shift along with the input, and pooling layers then make the representation approximately translation invariant. Recurrent networks and their gated variants, such as LSTMs and GRUs, handle sequences and time series data by maintaining internal state across time steps. More recently, transformer architectures have become the default choice for many language and sequence tasks by using attention mechanisms to model relationships across long contexts. Each architecture brings design choices that affect performance and resource needs.
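Two of the building blocks named above are small enough to sketch directly. The following is a simplified, dependency-free illustration: `conv1d` is a one-dimensional stand-in for the two-dimensional convolutions used on images, and `attention` is single-head scaled dot-product attention without learned projections. The function names and the toy signal are assumptions made for this example.

```python
import math


def conv1d(signal, kernel):
    """'Valid' 1D convolution as used in CNNs (cross-correlation, no padding)."""
    k = len(kernel)
    return [
        sum(kernel[j] * signal[i + j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)  # how much this query attends to each key
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs


edge_kernel = [-1.0, 1.0]                      # responds to a rising edge
signal = [0.0, 0.0, 1.0, 1.0, 0.0, 0.0, 0.0]
shifted = [0.0] + signal[:-1]                  # same pattern, one step later

print(conv1d(signal, edge_kernel))             # response peaks at the rising edge
print(conv1d(shifted, edge_kernel))            # same response, one position later
print(max(conv1d(signal, edge_kernel)) == max(conv1d(shifted, edge_kernel)))
```

Shifting the input shifts the convolution output by the same amount, and taking the maximum over positions (a crude form of pooling) gives the same response for both inputs. Attention, by contrast, lets every query position weigh every key position directly, which is what allows transformers to relate distant parts of a sequence.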

Training Strategies and Best Practices

Successful Deep Learning requires careful attention to data quality, augmentation, and regularization. Data augmentation creates additional training examples by applying transformations, such as translation, rotation, or color changes in the case of images. Regularization techniques like dropout, weight decay, and early stopping discourage networks from memorizing training data and help them generalize to new examples. Learning rate schedules and adaptive optimizers play a crucial role in convergence. Monitoring validation performance while experimenting with model size and batch size helps practitioners find stable setups that deliver reliable results at scale.
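Three of the practices above can be written down compactly. The sketch below shows a cosine learning rate schedule, an SGD update with weight decay, and an early stopping rule driven by validation loss. The function names, default values, and the example loss sequence are assumptions chosen for illustration; frameworks ship their own, more complete versions of each.

```python
import math


def cosine_lr(step, total_steps, lr_max=0.1, lr_min=0.001):
    """Cosine schedule: decays smoothly from lr_max at step 0 to lr_min."""
    progress = min(step, total_steps) / total_steps
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


def sgd_with_weight_decay(weights, grads, lr, weight_decay=1e-4):
    """SGD step where weight decay adds weight_decay * w to each gradient,
    pulling every weight slightly toward zero."""
    return [w - lr * (g + weight_decay * w) for w, g in zip(weights, grads)]


def early_stopping(val_losses, patience=2, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once `patience` epochs have passed without the loss
    improving on the best value seen so far by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, current in enumerate(val_losses):
        if current < best_loss - min_delta:
            best_loss, best_epoch = current, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Hypothetical validation losses: improvement stalls after epoch 2.
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73]
best_epoch, stop_epoch = early_stopping(val_losses, patience=2)
print(f"restore weights from epoch {best_epoch}, stopped at epoch {stop_epoch}")
```

In practice the checkpoint from the best epoch is restored after stopping, so the patience window costs only compute, not model quality.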

Tools and Compute Considerations

Advances in hardware have accelerated the adoption of Deep Learning. Graphics processing units (GPUs) and specialized accelerators provide the throughput needed for the matrix operations that dominate training time. Frameworks such as TensorFlow and PyTorch enable rapid model design and iteration with high-level APIs and production-ready deployment paths. Cloud providers and managed services make it easier to provision GPU resources and scale training jobs without heavy upfront investment. Choosing the right mix of software tools and compute infrastructure is a strategic decision for teams building Deep Learning systems.

Applications Across Industries

Deep Learning drives innovation in many domains. In healthcare, models analyze medical images and genomic data to assist diagnosis and personalized treatment. In finance, algorithms detect fraud, optimize portfolios, and forecast market trends. In retail, recommendation engines personalize the shopping experience and optimize inventory. In transportation, autonomous driving stacks rely heavily on perception and planning modules powered by deep models. The broad applicability of Deep Learning means that understanding its strengths and limitations is important for leaders in both product and operations.

Interpretability and Trust

One challenge in Deep Learning is that complex models can be difficult to interpret. Stakeholders often require explanations for automated decisions, especially in high-risk settings. Techniques such as feature attribution, visualization, surrogate modeling, and concept activation vectors help shed light on model behavior. Investing in interpretability tools increases trust and helps identify biases in training data that could harm model performance in production. Governance practices, including audit trails, testing, and human-in-the-loop review, are essential when deploying models that affect people.

Ethical and Societal Considerations

Responsible use of Deep Learning must consider fairness, privacy, and safety. Models trained on biased data can reproduce or amplify societal inequities. Privacy-preserving methods such as federated learning, differential privacy, and synthetic data generation can reduce exposure of sensitive information. Safety research focuses on robustness to adversarial inputs and on understanding failure modes. Cross-functional teams that include ethicists, legal experts, and domain specialists produce more thoughtful policies for model development and deployment.

Getting Started with Deep Learning

For developers and students new to Deep Learning, a practical path combines theory, hands-on experiments, and project work. Start with foundational courses in linear algebra, probability, and optimization. Then follow practical tutorials to build and train simple neural networks on standard datasets for image classification or text analysis. Contribute to open source projects, read research papers, and participate in community forums to keep pace with rapid innovation. For curated content and ongoing analysis, visit techtazz.com, where you will find guides, news, and tutorials across core topics.

Measuring Success and Performance

Evaluation metrics must match the business objective. Accuracy, precision, recall, and F-score are common in classification tasks, while mean squared error and mean absolute error are used for regression. In ranking and recommendation settings, metrics such as mean average precision and normalized discounted cumulative gain better capture user satisfaction. Beyond single-metric evaluation, consider fairness and latency constraints that affect the user experience. Continuous monitoring after deployment helps ensure that models retain quality over time as data distributions change.
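Most of the metrics named above reduce to a few lines of arithmetic. The sketch below implements them in plain Python for binary labels (0 or 1) and graded relevance scores. The function names and sample inputs are assumptions for illustration, and the DCG form used here (relevance divided by log2 of rank + 1, with ranks starting at 1) is one of several common variants.

```python
import math


def precision_recall_f1(y_true, y_pred):
    """Precision, recall, and F1 for binary labels, with 1 as the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def mse(y_true, y_pred):
    """Mean squared error: penalizes large errors more heavily."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error: every unit of error counts the same."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def dcg(relevances):
    """Discounted cumulative gain for relevances listed in ranked order."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances))


def ndcg(relevances):
    """DCG divided by the DCG of the ideal (descending) ordering."""
    ideal = dcg(sorted(relevances, reverse=True))
    return dcg(relevances) / ideal if ideal else 0.0


y_true = [1, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
print(f"ndcg={ndcg([3, 2, 3, 0, 1, 2]):.3f}")
```

The choice between these depends on the cost of each kind of error: precision matters when false alarms are expensive, recall when misses are, and NDCG when the order of results shapes what users actually see.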

Future Directions

Deep Learning research continues to evolve, with ongoing work in areas such as efficient architectures, unsupervised learning, and model compression. Techniques that reduce compute and memory requirements enable deployment on edge devices and expand the scope of applications. Self-supervised methods reduce the need for labeled data by learning from raw inputs. Research that combines symbolic reasoning with neural models aims to improve generalization and interpretability. For professionals exploring the business context of these advances, a community hub such as BusinessForumHub.com offers discussion and case studies that link technical advances to organizational strategy.
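As one concrete instance of model compression, weights stored as 32-bit floats can be mapped to 8-bit integers plus a single scale factor. The sketch below shows symmetric linear quantization in its simplest per-tensor form. It is an illustrative assumption about how such a scheme can look, not a description of any particular framework's implementation, and the names `quantize_int8` and `dequantize` are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid dividing by zero
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from integers and the shared scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, f"scale={scale:.4f}", f"worst error={worst_error:.6f}")
```

Each weight now needs one byte instead of four, and the rounding error per weight is bounded by half the scale. Whether that error is acceptable depends on the model and task, which is why quantized models are normally re-evaluated before deployment.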

Conclusion

Deep Learning is a transformative field that blends algorithm design, data engineering, and domain knowledge. Its applications span industries, and its methods continue to improve in both power and accessibility. By focusing on sound data practices, interpretability, and ethical governance, teams can harness the potential of Deep Learning while mitigating risks. Whether you are building prototypes or scaling production systems, a clear strategy that balances model accuracy, model explainability, and operational constraints will deliver the best outcomes.