AI Hardware Guide for Modern Computing

AI hardware is the foundation that turns advanced algorithms into real-world results. From data centers that train large models to small devices that run inference at the edge, the right hardware can dramatically change the trade-offs among cost, time, and energy. This guide explains the core device types, key metrics, and practical selection tips so you can make confident choices for development and deployment. For a central hub of tech insight and updates, visit techtazz.com to explore more guides, reviews, and analysis.

What is AI Hardware?

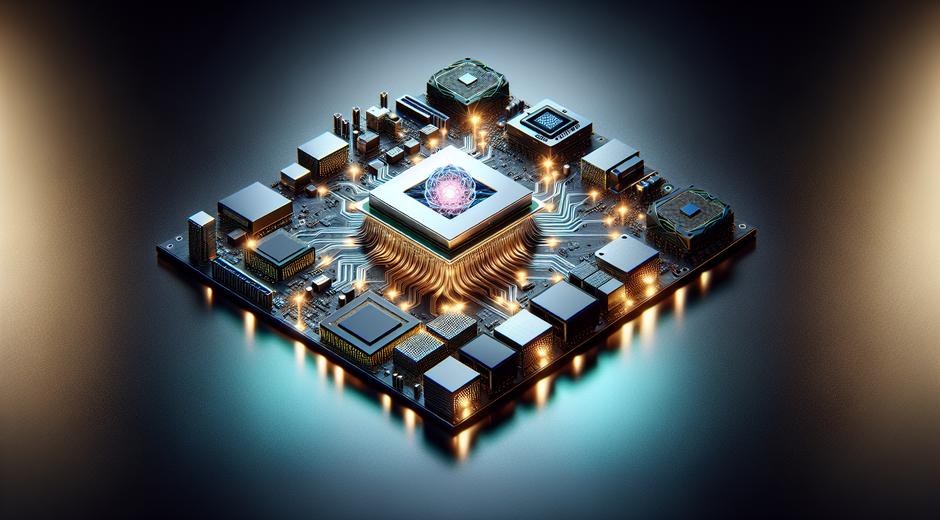

AI hardware refers to the physical compute and memory components built to run artificial intelligence workloads, chiefly model training and model inference. Training involves heavy compute for optimization and large data movement. Inference runs a trained model on new inputs, either in real time or in batches. AI hardware is designed to optimize throughput, latency, and power consumption for these tasks while preserving model accuracy.

While a general-purpose processor can run AI tasks, the most efficient systems use specialized designs that accelerate the matrix math, sparse operations, and memory access patterns typical of neural networks. The result is faster training, reduced energy cost, and the ability to deploy intelligent features in constrained environments such as mobile devices, sensors, and on-premises appliances.

Main Types of AI Hardware

Understanding the main categories helps match hardware to workload.

- Graphics processing units (GPUs): These are widely used for both training and inference because they offer massively parallel compute and a rich software ecosystem. GPUs excel at dense linear algebra and large-batch processing.

- Tensor processing units (TPUs): TPUs are Google-designed accelerators built for the tensor operations common in deep learning. They can deliver high throughput for both training and inference when paired with compatible frameworks.

- Field-programmable gate arrays (FPGAs): FPGAs provide reconfigurable hardware logic that can be tuned to specific models and latency targets. They are useful when low latency and power efficiency are priorities and when workloads change over time.

- Application-specific integrated circuits (ASICs): ASICs are custom chips optimized for a narrow set of operations. When the volume and lifetime of a product justify the design cost, ASICs can deliver very high efficiency.

- Central processing units (CPUs): Modern CPUs with vector units can handle many AI tasks, especially when models are small or when integration with existing software stacks matters more than raw throughput.

- Neuromorphic chips: These chips mimic biological neural networks and aim to deliver very low power consumption for sparse, event-driven workloads. They are an emerging option for always-on devices and certain sensing scenarios.

Key Performance Metrics to Evaluate

Selecting AI hardware requires an understanding of measurable trade-offs. The most important metrics are compute throughput, memory bandwidth, latency, energy use, and software compatibility.

- Compute throughput: Measured in operations per second, this indicates raw capacity for parallel math. For deep learning, whether floating-point or integer throughput matters depends on the precision the model uses.

- Memory bandwidth: Many AI models are limited by the speed of moving data between memory and compute units. Higher bandwidth reduces stalls and improves effective compute usage.

- Latency: For real-time applications such as voice assistants and autonomous devices, low latency is critical. Some hardware focuses on high throughput at the cost of increased latency per request.

- Power efficiency: Measured in operations per watt, energy use is a chief concern for both cloud providers and edge devices. Efficient hardware cuts operational expense and enables battery-powered AI.

- Model support and frameworks: Hardware that integrates with major AI frameworks and toolchains reduces development time. Good vendor libraries, compilers, and profiling tools matter as much as silicon specs.
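The interplay between compute throughput and memory bandwidth can be sketched with a simple roofline-style estimate: attainable throughput is capped by whichever is lower, the peak compute rate or the memory bandwidth multiplied by the operations performed per byte moved. The sketch below is illustrative only. The function names and the accelerator figures (100 TOPS, 1 TB/s, 300 W) are hypothetical values chosen for the example, not specifications of any real device.

```python
def attainable_throughput(peak_ops_per_s, mem_bandwidth_bytes_per_s, ops_per_byte):
    """Roofline-style upper bound: the lower of the compute and memory ceilings."""
    memory_ceiling = mem_bandwidth_bytes_per_s * ops_per_byte
    return min(peak_ops_per_s, memory_ceiling)


def ops_per_watt(ops_per_s, watts):
    """Power efficiency as operations per second per watt."""
    return ops_per_s / watts


# Hypothetical accelerator (assumed numbers, not a real product).
PEAK = 100e12       # 100 tera-operations per second
BANDWIDTH = 1e12    # 1 terabyte per second
POWER = 300.0       # watts

# A workload doing 10 operations per byte is limited by memory bandwidth.
low_intensity = attainable_throughput(PEAK, BANDWIDTH, ops_per_byte=10)

# A workload doing 500 operations per byte is limited by peak compute.
high_intensity = attainable_throughput(PEAK, BANDWIDTH, ops_per_byte=500)

for label, value in [("low intensity", low_intensity), ("high intensity", high_intensity)]:
    print(f"{label}: {value / 1e12:.0f} TOPS, {ops_per_watt(value, POWER) / 1e9:.1f} GOPS/W")
```

Under these assumed numbers, the low-intensity workload reaches only a tenth of the peak rate, which is why a spec sheet's headline throughput rarely tells the whole story.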

Software Ecosystem and Optimization

Hardware alone does not deliver peak performance. Compiler support, kernel libraries, runtime optimizers, and model quantization tools are vital. Many vendors supply SDKs that optimize layers, memory layout, and kernel selection to match hardware traits. Open-source libraries also play a role because portability across devices reduces vendor lock-in and increases flexibility.

Profiling and benchmarking on representative workloads lets teams identify bottlenecks and tune batch sizes, precision, and parallelism. Quantization reduces memory and compute demands by moving from high-precision to lower-precision arithmetic, often without losing significant accuracy. Pruning and operator fusion are other techniques that improve inference performance and reduce memory needs.
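To make the quantization idea concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is one simple scheme among several and is not tied to any vendor toolchain. The function names and sample weights are made up for illustration.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one symmetric scale."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero input, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


# Illustrative weights (assumed values, not from a real model).
weights = [0.82, -1.27, 0.05, 0.0, 0.64]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each value now fits in one byte instead of four, and the round-trip error stays within half of the scale. Whether that error is acceptable depends on the model, so it is worth checking accuracy on representative data.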

Choosing the Right AI Hardware for Your Project

Answering a few practical questions simplifies decision making. Is the target use case training or inference? Is the workload latency sensitive? What are the available power envelope and budget? How critical are vendor support and software compatibility?

For large-scale model training in cloud or on-premises clusters, GPUs and TPUs often lead due to their throughput and mature toolchains. For inference at scale, where cost per prediction matters, optimized ASICs or efficient GPUs can yield the best total cost of ownership. For edge devices with strict power and thermal limits, neuromorphic or specialized low-power accelerators can be ideal.

Proof-of-concept and pilot programs help validate choices. Running small-scale tests with representative data and models identifies real-world bottlenecks and helps refine procurement decisions. When vendor demos look promising, ask for access to trial units or cloud-based test beds to gather performance numbers on your specific workload.
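A pilot test can be as simple as a small timing harness that records latency percentiles and throughput. The sketch below uses only the Python standard library. `fake_inference` is a hypothetical stand-in workload and should be replaced by a call into the real model on the hardware under evaluation.

```python
import statistics
import time


def benchmark(run_once, batch_size, warmup=3, iterations=20):
    """Time a callable and report median latency, p95 latency, and throughput."""
    # Warm-up runs let caches and lazy initialization settle before timing.
    for _ in range(warmup):
        run_once()

    latencies = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_once()
        latencies.append(time.perf_counter() - start)

    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = statistics.quantiles(latencies, n=20)[-1]
    return {
        "p50_ms": statistics.median(latencies) * 1000,
        "p95_ms": p95 * 1000,
        "items_per_s": batch_size / statistics.mean(latencies),
    }


def fake_inference(batch_size=8):
    """Placeholder workload (an assumption); swap in a real model call."""
    total = 0.0
    for i in range(batch_size * 10_000):
        total += i * 0.5
    return total


report = benchmark(lambda: fake_inference(8), batch_size=8)
print(report)
```

Repeating the run at several batch sizes shows how throughput and per-request latency pull against each other on a given device.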

Cost and Scalability Considerations

Total cost includes upfront hardware purchase or cloud consumption, licensing, and maintenance. In cloud environments, pay-as-you-go models simplify scaling, but long-running training jobs can be more cost-effective on owned hardware. Consider the amortization of capital expense and the flexibility needed for future model sizes.
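The cloud-versus-owned question often comes down to a break-even calculation. Below is a minimal sketch with made-up prices: the purchase cost, hourly operating cost, and cloud rate are all assumptions for illustration, and a real comparison should also account for utilization, depreciation, and staffing.

```python
def break_even_hours(purchase_cost, owned_hourly_cost, cloud_hourly_rate):
    """Hours of use after which owned hardware becomes cheaper than renting."""
    savings_per_hour = cloud_hourly_rate - owned_hourly_cost
    if savings_per_hour <= 0:
        # Renting is never more expensive per hour, so ownership never pays off.
        return None
    return purchase_cost / savings_per_hour


# Hypothetical figures: a 30,000 unit purchase, 0.50 per hour for power and
# upkeep, versus 3.00 per hour for a comparable cloud instance.
hours = break_even_hours(purchase_cost=30_000, owned_hourly_cost=0.5, cloud_hourly_rate=3.0)
print(f"break-even after {hours:.0f} hours (~{hours / 24 / 365:.1f} years of continuous use)")
```

With these assumed numbers, ownership pays off only after well over a year of continuous use, so expected utilization matters as much as the sticker price.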

Scalability also depends on interconnects and software. Multi-node training needs fast links and topology-aware software to minimize communication overhead. For inference, scaling horizontally often means handling orchestration and load balancing to maintain latency targets.
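A rough feel for communication overhead in multi-node training can come from a back-of-envelope model. The sketch below assumes a ring all-reduce, in which each node transfers about 2(N-1)/N times the gradient size per step, and ignores per-message latency and any overlap of compute with communication. The gradient size, node count, and link speed are hypothetical.

```python
def ring_allreduce_seconds(gradient_bytes, num_nodes, link_bytes_per_s):
    """Bandwidth-only estimate of one ring all-reduce (ignores link latency)."""
    if num_nodes < 2:
        return 0.0  # a single node has nothing to synchronize
    traffic_per_node = 2 * (num_nodes - 1) / num_nodes * gradient_bytes
    return traffic_per_node / link_bytes_per_s


def scaling_efficiency(compute_seconds, comm_seconds):
    """Share of each step spent computing, assuming no overlap with communication."""
    return compute_seconds / (compute_seconds + comm_seconds)


# Hypothetical setup: 4 GB of gradients, 8 nodes, 25 GB/s links, 0.5 s compute per step.
comm = ring_allreduce_seconds(gradient_bytes=4e9, num_nodes=8, link_bytes_per_s=25e9)
eff = scaling_efficiency(compute_seconds=0.5, comm_seconds=comm)
print(f"communication: {comm:.2f} s per step, efficiency: {eff:.0%}")
```

Real frameworks usually overlap communication with computation, so measured efficiency is often better than this estimate. The model is mainly useful for spotting when link speed is likely to be the limiting factor.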

Trends Shaping the Future of AI Hardware

Innovation is moving fast on multiple fronts. Advances include mixed-precision compute that maximizes throughput while preserving accuracy, emerging memory technologies that increase bandwidth and density, and new chip architectures that reduce data movement. 3D stacking and chiplet designs help increase compute density in compact form factors. Optical and quantum research continues, though practical general-purpose solutions remain in early phases for most teams.

Another major trend is the rise of dedicated inference silicon in consumer devices, enabling always-on features with low energy use. Software-driven optimization and model-aware compilers will play a growing role so that models and hardware co-evolve. This will open new use cases in robotics, industrial automation, and personalized services.

How to Stay Updated and Where to Find Resources

With hardware technology shifting quickly, staying informed helps you exploit new options early. Vendor blogs, research papers, and performance white papers provide deep insight. Community projects and benchmarking suites offer practical comparisons on similar workloads. For curated news, reviews, and deep dives that help you compare platforms and vendors, explore industry-focused resources such as vendor catalogs and expert sites. If you want a resource hub that aggregates new product announcements, comparison articles, and procurement guides, visit Chronostual.com for additional perspective and links to tools and vendors.

Conclusion and Action Steps

Choosing AI hardware is a strategic decision that affects cost, performance, and product capabilities. Start with clearly defined goals, select representative workloads for testing, compare real-world numbers, and factor in the software ecosystem and long-term support. Pilot hardware early and be ready to iterate as models grow and new architectures become available.

Adopting the right hardware strategy unlocks faster development, shorter time to market, and lower operational cost. Use this guide as a framework for evaluation, and return to vendor documentation and independent benchmarks as you narrow your choices. With careful selection and optimization, your AI systems will be positioned to deliver real value for users and customers.